Software-Defined Networking-Based Campus Networks Via Deep Reinforcement Learning Algorithms: The Case of University of Technology


Abstract

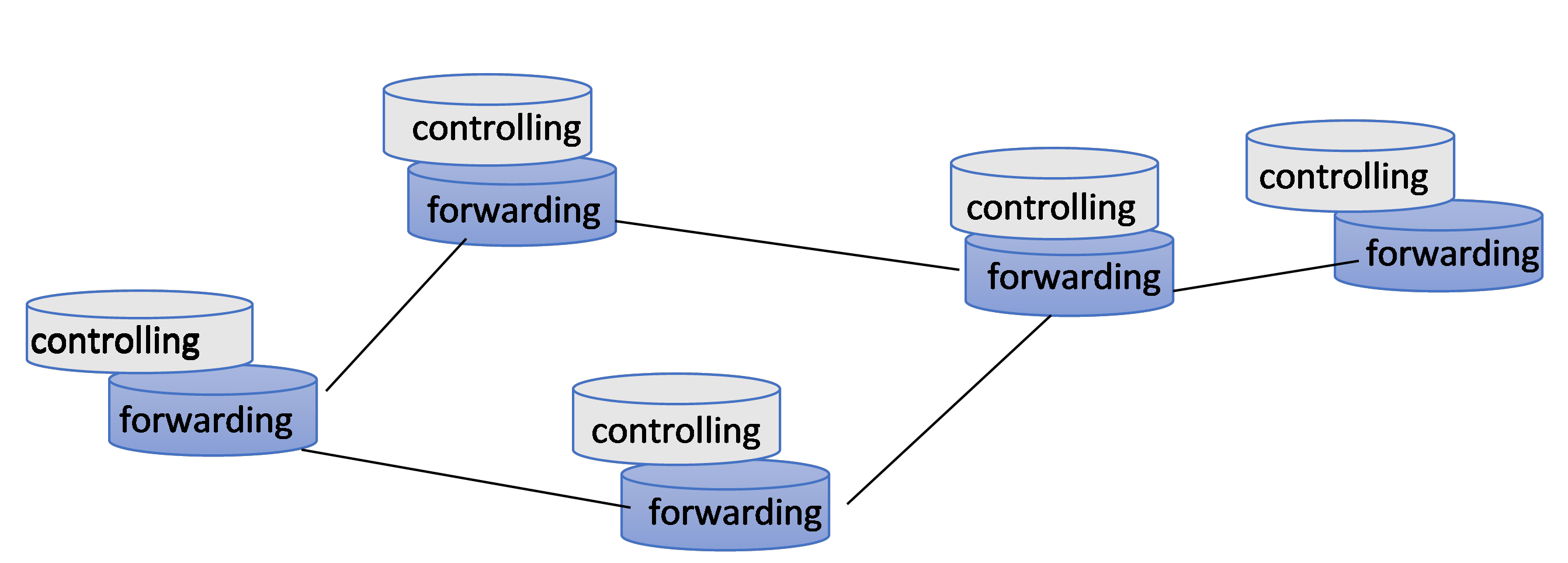

As a consequence of the COVID-19 pandemic, networks need to be adapted to meet new demands. People have been introduced to new modes of working from home, attending teleconferences, and taking part in e-learning. Other technologies, including smart cities, the Internet of Things, and simulation tools, have also seen a rise in demand. The network most affected by this shift is the campus network. Fortunately, a powerful and flexible network model called the software-defined network (SDN) is currently being standardized. SDN can significantly improve the performance of campus networks. Consequently, many scholars and experts have focused on enhancing campus networks via SDN technology.

Integrating deep reinforcement learning (DRL) with SDN is pivotal for advancing the quality of service (QoS) of contemporary networks. Their integration enables real-time collaboration, intelligent decision making, and optimized traffic flow and resource allocation.

The system proposed in this research applies a DRL algorithm to a campus network, with the University of Technology investigated as a case study. The proposed system employs a two-method approach for optimizing the QoS of a network. First, the system classifies traffic by service type; it then routes TCP traffic with a deep Q-network (DQN) for intelligent routing and manages UDP traffic with the Dijkstra algorithm for shortest-path selection. This hybrid model leverages the strengths of machine learning and classical algorithms to ensure efficient resource allocation and high-quality data transmission. The system combines the adaptability of DQN with the proven reliability of the Dijkstra algorithm to dynamically enhance network performance.
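The protocol-based split described above can be illustrated with a minimal Python sketch. It is not the implementation used in the study: the topology `TOPOLOGY`, the function names `dijkstra_path` and `route_flow`, and the `tcp_policy` callable are all hypothetical, and `tcp_policy` merely stands in for a trained DQN agent, whose architecture and training are not shown here.

```python
import heapq

# Hypothetical four-switch topology: node -> {neighbour: link cost}.
TOPOLOGY = {
    "s1": {"s2": 1, "s3": 4},
    "s2": {"s1": 1, "s3": 1, "s4": 5},
    "s3": {"s1": 4, "s2": 1, "s4": 1},
    "s4": {"s2": 5, "s3": 1},
}

def dijkstra_path(graph, src, dst):
    """Return the lowest-cost path from src to dst, or None if unreachable."""
    dist = {src: 0.0}
    prev = {}
    heap = [(0.0, src)]
    visited = set()
    while heap:
        d, node = heapq.heappop(heap)
        if node in visited:
            continue  # stale heap entry
        visited.add(node)
        if node == dst:
            break
        for nbr, cost in graph.get(node, {}).items():
            nd = d + cost
            if nd < dist.get(nbr, float("inf")):
                dist[nbr] = nd
                prev[nbr] = node
                heapq.heappush(heap, (nd, nbr))
    if dst not in dist:
        return None
    # Walk predecessors back from dst to src, then reverse.
    path = [dst]
    while path[-1] != src:
        path.append(prev[path[-1]])
    return path[::-1]

def route_flow(graph, src, dst, protocol, tcp_policy):
    """Dispatch a flow by transport protocol.

    UDP flows take the Dijkstra shortest path; TCP flows are delegated to
    tcp_policy, a placeholder (assumption) for a learned DQN routing agent.
    """
    if protocol == "UDP":
        return dijkstra_path(graph, src, dst)
    if protocol == "TCP":
        return tcp_policy(graph, src, dst)
    raise ValueError(f"unsupported protocol: {protocol}")
```

In this sketch any callable with the signature `(graph, src, dst)` can be passed as `tcp_policy`, so a learned agent could be swapped in without changing the UDP branch.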

The proposed hybrid system, which used DQN for TCP traffic and the Dijkstra algorithm for UDP traffic, was benchmarked against two other algorithms. The first algorithm was an advanced version of the Dijkstra algorithm that was designed specifically for this study. The second algorithm involved a Q-learning (QL)-based approach. The evaluation metrics included throughput and latency. Tests were conducted under various topologies and load conditions.
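For readers unfamiliar with the QL baseline, the standard tabular Q-learning update can be sketched as follows. This is a generic textbook formulation, not the baseline evaluated in the study: the state and action encoding (current switch, next hop), the reward, and the values of `alpha`, `gamma`, and `epsilon` are assumptions chosen for illustration.

```python
import random

def q_update(q, state, action, reward, next_state, next_actions,
             alpha=0.1, gamma=0.9):
    """Apply one tabular Q-learning step and return the new Q-value.

    Q(s, a) <- Q(s, a) + alpha * (r + gamma * max_a' Q(s', a') - Q(s, a))
    Unseen (state, action) pairs default to 0.0.
    """
    best_next = max((q.get((next_state, a), 0.0) for a in next_actions),
                    default=0.0)
    old = q.get((state, action), 0.0)
    q[(state, action)] = old + alpha * (reward + gamma * best_next - old)
    return q[(state, action)]

def choose_next_hop(q, state, actions, epsilon, rng=random):
    """Epsilon-greedy choice among candidate next hops (hypothetical encoding)."""
    if rng.random() < epsilon:
        return rng.choice(actions)  # explore
    return max(actions, key=lambda a: q.get((state, a), 0.0))  # exploit
```

A DQN replaces the table `q` with a neural network that approximates the same action values, which is what allows it to scale to larger state spaces.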

The research findings revealed a clear advantage of the hybrid system in complex network topologies under heavy-load conditions. The throughput of the proposed system was 30% higher than that of the advanced Dijkstra and QL algorithms. The latency benefits were also pronounced, with a 50% improvement over the competing algorithms.