Deep Learning-Based Speech Emotion Recognition Using Librosa

Abstract

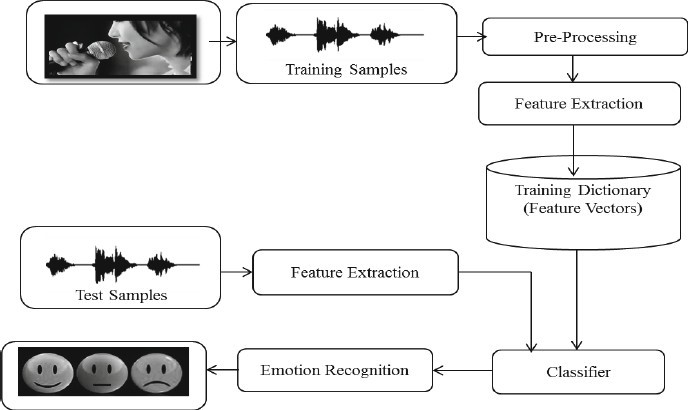

Speech Emotion Recognition is a task in computational paralinguistics and speech processing that aims to identify and classify the emotions expressed in spoken language. The objective is to infer a speaker's emotional state, such as happiness, anger, sadness, or frustration, from speech patterns such as prosody, pitch, and rhythm. Emotion detection has become an important tool in marketing, because products and services can be tailored to a person's interests and state of mind. For this reason, we chose to work on a project that identifies a person's emotions from their speech alone, which can support a variety of AI-related applications. For example, a call center could play music during tense exchanges, and a smart automobile could slow down when the driver is frightened or angry. We processed the audio files and extracted features from them in Python using the Librosa module. Librosa is a Python library for audio and music analysis that provides the building blocks needed to create music information retrieval systems. Applications of this kind have considerable market potential, both for helping businesses and for improving customer safety.
