Enhancing Sign Language Recognition through Fusion of CNN Models


Abstract

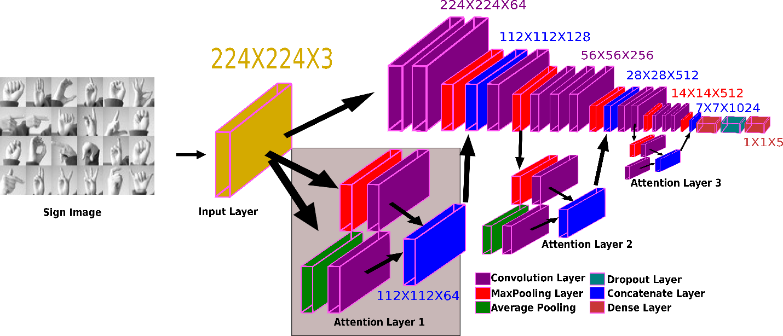

This study introduces a hybrid model for sign language recognition, focusing on American Sign Language (ASL) and Indian Sign Language (ISL). Rather than relying on traditional machine learning methods alone, the model combines hand-crafted techniques with deep learning approaches to overcome their inherent limitations. The hybrid model achieves an accuracy of 96% for ASL and 97% for ISL, compared with the 90-93% typical of previous models. This result supports the efficacy of combining predefined features and rules with neural networks. Because the model recognizes both ASL and ISL signs, it also addresses some of the variation among sign languages used around the world. Its accuracy makes it a practical and accessible tool for the hearing-impaired community, with implications for real-world applications in education, healthcare, and other contexts where communication between hearing-impaired individuals and others is essential. The study thus advances sign language recognition and contributes to more inclusive communication.
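The abstract does not specify how the hand-crafted and learned components are combined. As an illustration only, one common option is feature-level fusion: each feature vector is L2-normalized and the two are concatenated before classification. The sketch below assumes that scheme. The function names (`handcrafted_features`, `fuse`), the toy gradient descriptor, and the stand-in `learned` embedding are all hypothetical and are not taken from the study.

```python
import math
from typing import List, Sequence


def l2_normalize(vec: Sequence[float]) -> List[float]:
    """Scale a vector to unit length; an all-zero vector is returned as zeros."""
    norm = math.sqrt(sum(v * v for v in vec))
    if norm == 0.0:
        return [0.0 for _ in vec]
    return [v / norm for v in vec]


def handcrafted_features(image: Sequence[Sequence[float]]) -> List[float]:
    """Toy hand-crafted descriptor (an assumption, not the study's features).

    Returns [mean |horizontal gradient|, mean |vertical gradient|, mean intensity].
    The image must be a rectangular grid with at least 2 rows and 2 columns.
    """
    rows, cols = len(image), len(image[0])
    gx = sum(
        abs(image[r][c + 1] - image[r][c])
        for r in range(rows)
        for c in range(cols - 1)
    )
    gy = sum(
        abs(image[r + 1][c] - image[r][c])
        for r in range(rows - 1)
        for c in range(cols)
    )
    mean = sum(sum(row) for row in image) / (rows * cols)
    return [gx / (rows * (cols - 1)), gy / ((rows - 1) * cols), mean]


def fuse(handcrafted: Sequence[float], learned: Sequence[float]) -> List[float]:
    """Feature-level fusion: normalize each vector, then concatenate them."""
    return l2_normalize(handcrafted) + l2_normalize(learned)


# Usage: a 2x2 grayscale patch and a placeholder for a CNN embedding.
patch = [[0.0, 1.0], [0.0, 1.0]]
learned = [3.0, 4.0]  # hypothetical stand-in for a network's output vector
descriptor = handcrafted_features(patch)
fused = fuse(descriptor, learned)
```

Normalizing each vector before concatenation keeps one feature family from dominating the other purely because of its scale. The fused vector would then be passed to whatever classifier the pipeline uses.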