ATiTHi: A Deep Learning Approach for Tourist Destination Classification using Hybrid Parametric Optimization

Abstract

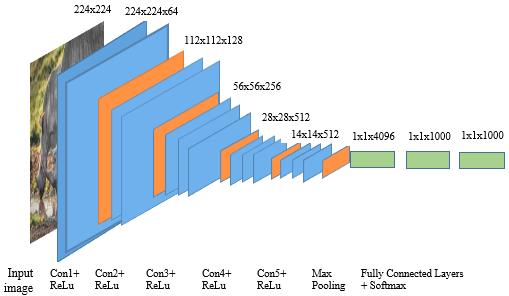

A picture is the best way to explore a tourist destination through visual content. Content-based image classification of tourist destinations makes it possible to understand tourists' preferences and thereby provide a more satisfying tour. It also provides an important reference for tourist destination marketing. To enhance the competitiveness of the tourism market in India, this research proposes an innovative tourist spot identification mechanism that identifies the content of a large number of tourist photos using a convolutional neural network (CNN) approach. It overcomes the limitations of manual approaches by recognizing visual information in photos. In this study, six thousand photos from different tourist destinations in India were identified and grouped into six major categories to form a new dataset, Indian Trajectory. The research employed transfer learning (TL) strategies, which help to obtain good performance on image classification with a very small dataset. VGG-16, VGG-19, MobileNetV2, InceptionV3, ResNet-50 and AlexNet CNN models with pretrained weights from the ImageNet dataset were used for initialization, and an adapted classifier was then used to classify tourist destination images from the newly prepared dataset. Hybrid hyperparameter optimization was employed to find the hyperparameters for the proposed ATiTHi model, leading to a more efficient classification model. To analyse and compare the performance of the models, well-known performance indicators were selected. The VGG-16 model performed best in terms of accuracy (0.95), compared with MobileNetV2 (0.93), VGG-19 (0.918), InceptionV3 (0.89), ResNet-50 (0.852) and AlexNet (0.83). These results show the effectiveness of the proposed model in tourist destination image classification.
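The abstract does not say which strategies the hybrid hyperparameter optimization combines. As a purely illustrative sketch, the Python below assumes one common reading: a coarse random search followed by a small grid refinement around the best random trial. The function `toy_validation_accuracy`, the two hyperparameters (`learning_rate`, `dropout`), their ranges and the trial counts are all hypothetical stand-ins, not the authors' settings. In practice the objective would train a pretrained CNN with an adapted classifier and return its validation accuracy.

```python
import math
import random


def toy_validation_accuracy(learning_rate, dropout):
    """Hypothetical stand-in for 'train the model, return validation accuracy'.

    The surface peaks at learning_rate=1e-3 and dropout=0.3; it is not
    derived from the paper and exists only so the sketch can run.
    """
    lr_term = (math.log10(learning_rate) + 3.0) ** 2
    dropout_term = (dropout - 0.3) ** 2
    return 0.95 - 0.05 * lr_term - 0.5 * dropout_term


def random_stage(objective, n_trials, rng):
    """Coarse stage: sample hyperparameters at random and keep the best trial."""
    best = None
    for _ in range(n_trials):
        params = {
            "learning_rate": 10 ** rng.uniform(-5, -1),  # log-uniform sampling
            "dropout": rng.uniform(0.0, 0.6),
        }
        score = objective(**params)
        if best is None or score > best[0]:
            best = (score, params)
    return best


def grid_refine(objective, centre, centre_score):
    """Fine stage: evaluate a small grid around the best random trial."""
    best = (centre_score, dict(centre))
    for lr_factor in (0.5, 0.75, 1.0, 1.5, 2.0):
        for dropout_offset in (-0.1, -0.05, 0.0, 0.05, 0.1):
            params = {
                "learning_rate": centre["learning_rate"] * lr_factor,
                # Keep dropout inside a valid range after applying the offset.
                "dropout": min(max(centre["dropout"] + dropout_offset, 0.0), 0.9),
            }
            score = objective(**params)
            if score > best[0]:
                best = (score, params)
    return best


def hybrid_search(objective, n_random=30, seed=0):
    """Run the random stage, then refine its winner on a local grid."""
    rng = random.Random(seed)
    coarse_score, coarse_params = random_stage(objective, n_random, rng)
    fine_score, fine_params = grid_refine(objective, coarse_params, coarse_score)
    return {
        "coarse": (coarse_score, coarse_params),
        "fine": (fine_score, fine_params),
    }


result = hybrid_search(toy_validation_accuracy)
print("best after random stage:", result["coarse"])
print("best after grid refinement:", result["fine"])
```

Because the refinement stage starts from the best random trial and only replaces it when a grid point scores higher, the final score can never fall below the random-search score. Each call to the objective is a full training run in a real setting, which is why the grid is kept small.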
