RAU: Novel Activation Function for Deep Learning Neural Network


Abstract

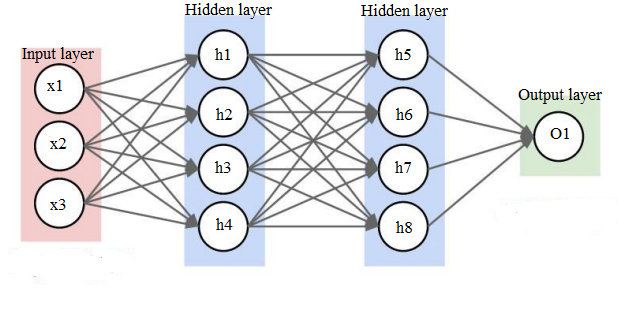

Activation functions play a vital role in deep learning neural networks (DNNs). In each neuron, the activation function generates the output signal from the given input signals. Hence, the activation function is one of the factors that influence the performance of a DNN. This paper proposes a novel activation unit, RAU (Reciprocal Activation Unit). Most popular activation functions give more importance to positive signals, whereas the proposed method handles negative and positive inputs equally. RAU was tested on both multiclass and binary classification datasets, using the Iris flower and Wisconsin Breast Cancer datasets for the analysis. On the Breast Cancer dataset, RAU achieves 99.25% accuracy on the training set and 97.08% on the test set. On the Iris dataset, RAU achieves 99.05% accuracy on the training set and 97.78% on the test set. The same datasets were also analyzed with existing activation functions: Sigmoid, RMAF, Swish, Tanh and ReLU. The results showed that RAU performed better than these activation functions on both datasets.
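As a minimal sketch of the baseline functions the abstract compares against, the Python below implements Sigmoid, Tanh, ReLU and Swish, plus a single neuron that computes f(w·x + b). RAU and RMAF are deliberately left out because their formulas are not stated in this section. The Swish parameter beta = 1 and the neuron's weights, inputs and bias are illustrative assumptions, not values from the paper.

```python
import math

# Baseline activations named in the abstract. RAU and RMAF are NOT
# implemented: their definitions are not given in this section.

def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))

def tanh(x: float) -> float:
    return math.tanh(x)

def relu(x: float) -> float:
    # Negative inputs are clipped to zero.
    return max(0.0, x)

def swish(x: float, beta: float = 1.0) -> float:
    # Swish: x * sigmoid(beta * x); beta = 1.0 is an assumed default here.
    return x * sigmoid(beta * x)

ACTIVATIONS = {"sigmoid": sigmoid, "tanh": tanh, "relu": relu, "swish": swish}

def neuron_output(inputs, weights, bias, activation):
    """Output signal of one neuron: activation(w . x + b)."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)

def compare_symmetry(z: float = 2.0):
    """Return (f(+z), f(-z)) per activation to show how negatives are treated."""
    return {name: (f(z), f(-z)) for name, f in ACTIVATIONS.items()}

if __name__ == "__main__":
    for name, (pos, neg) in compare_symmetry(2.0).items():
        print(f"{name:8s} f(+2) = {pos:+.4f}   f(-2) = {neg:+.4f}")
    # Hypothetical neuron: two inputs, made-up weights, zero bias.
    print(neuron_output([1.0, -3.0], [0.5, 0.5], 0.0, relu))
```

Running it shows the asymmetry the abstract refers to. ReLU passes +2 through unchanged but maps -2 to 0, and Swish maps +2 to about 1.7616 and -2 to about -0.2384. Tanh, by contrast, is odd, so f(-2) = -f(+2).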

